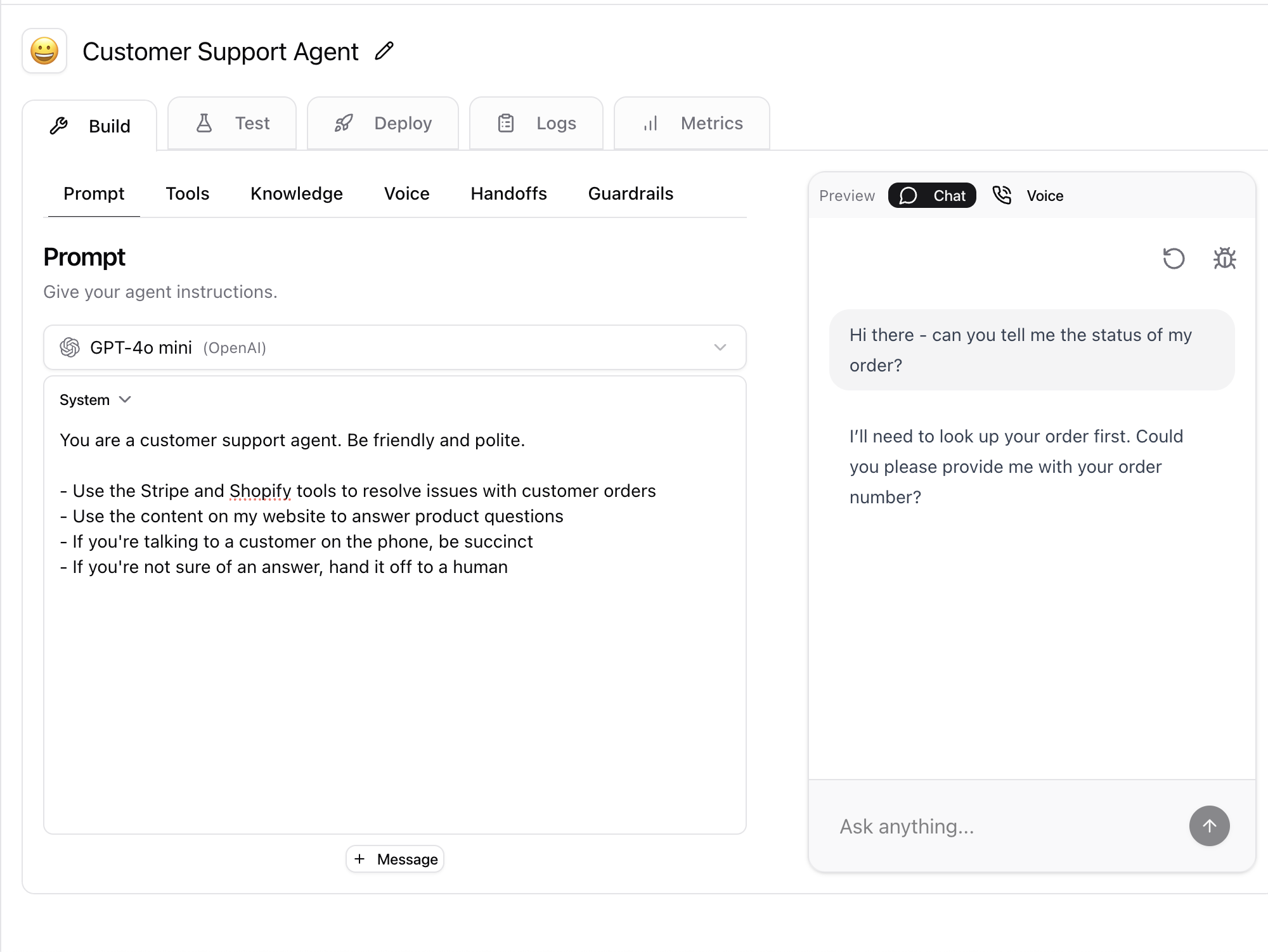

Model

The Model is underling Large Language Model (LLM) that will be used to power the agent. You can select between models from OpenAI, Anthropic, or Google. We recommend starting with OpenAI’s GPT-4o-mini. It’s cheap and fast. If you’re looking for a more advanced model, you should use OpenAI’s GPT-4o or o3-mini.Prompts

The Prompts are the instructions that will be used to define what the agent does. Think of the prompt like giving the agent a job description and also a personality. There are three types of prompts:- System Prompt: This is the initial prompt that will be used to guide the agent.

- User Prompt: The messages end-users will send to the agent

- Assistant Prompt: The responses from the agent

How do you avoid agent hallucinations? A good rule of thumb is to be more specific than less. If you can imagine a scenario where the agent could interpret your instructions in a way that is not what you intended, then you should be more specific.

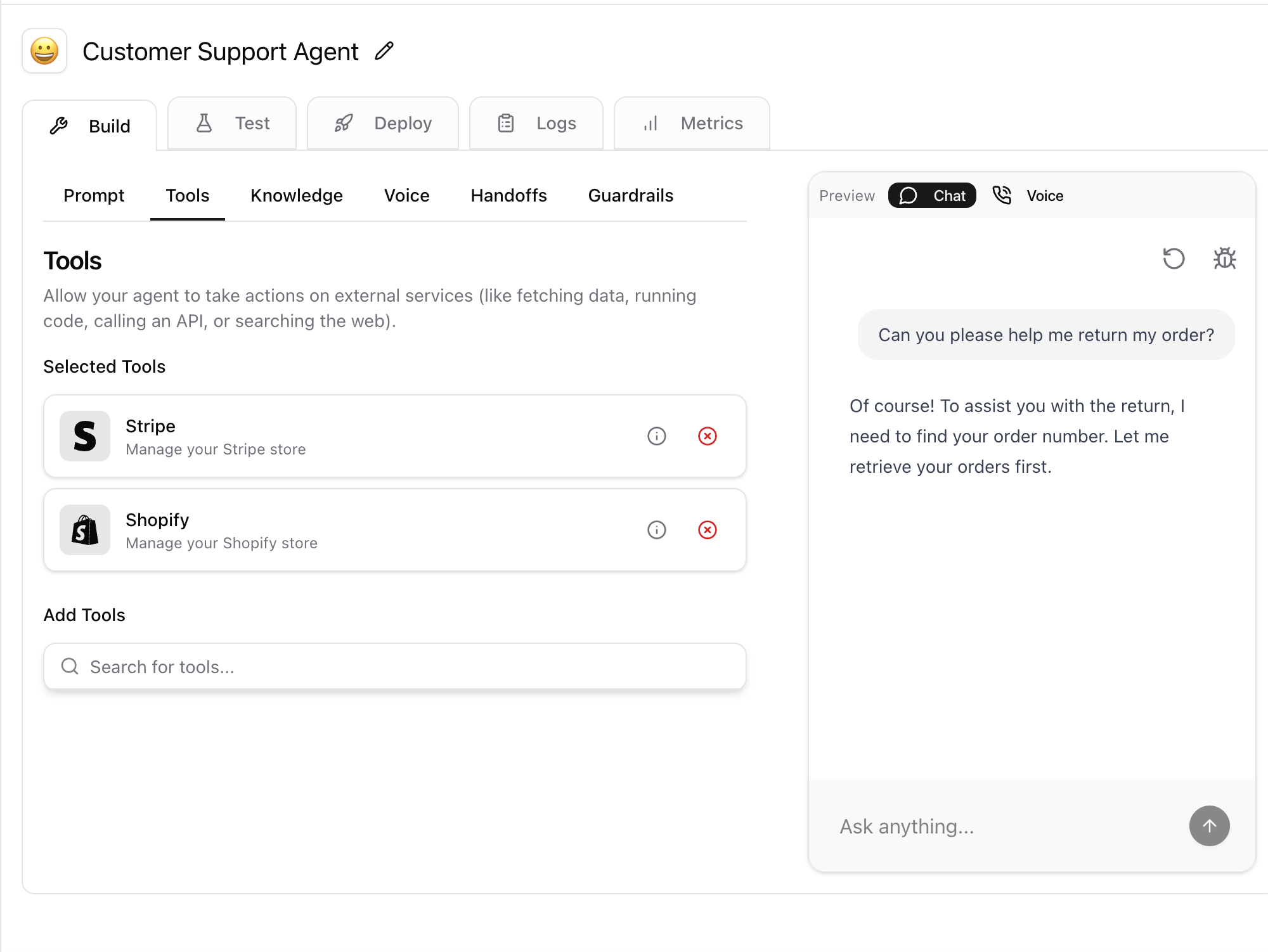

Tools

Tools are the actions that the agent can perform. Tools are bundled up into packages called “Toolkits”. Examples of Toolkits include “Gmail”, “Salesforce”, “Web Search”, and “Zendesk”. For example, the Gmail Toolkit has a tool for reading emails, a tool for sending emails, a tool for adding a label to an email etc. The agent will decide which Toolkit to use based on the user’s request. For example, if the user asks the agent to send an email, the agent will use the Gmail Toolkit. Tools are what make agents “really powerful”. Without tools, the agent would be a “dumb” AI that can only answer questions based on the prompts. With tools, the agent can interact with the real world and perform actions on your behalf.

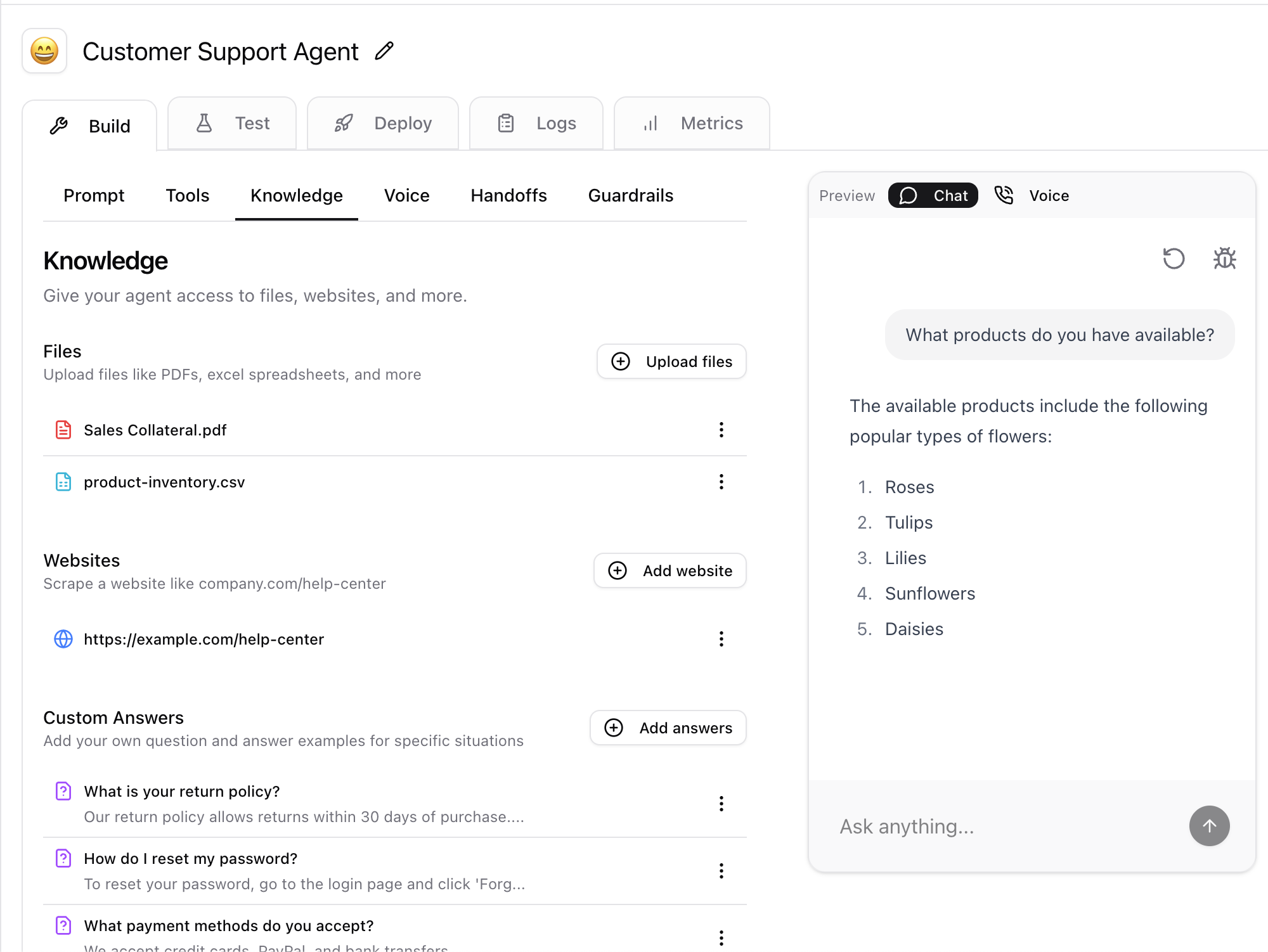

Knowledge

Knowledge defines what the agent knows. “Knowledge” is actually a tool, however, it’s such an advanced and commonly used tool that we separate it out. You can give the agent knowledge by uploading documents (like powerpoints, pdfs, etc), URLs (like your company’s help center or a a company wiki), or even create your own content. The agent will use the knowledge to answer specific user questions. For example, if you are building a customer support agent, you can upload your product manuals, sales materials, or even your company’s policies. The agent will use the knowledge to answer these specific questions.

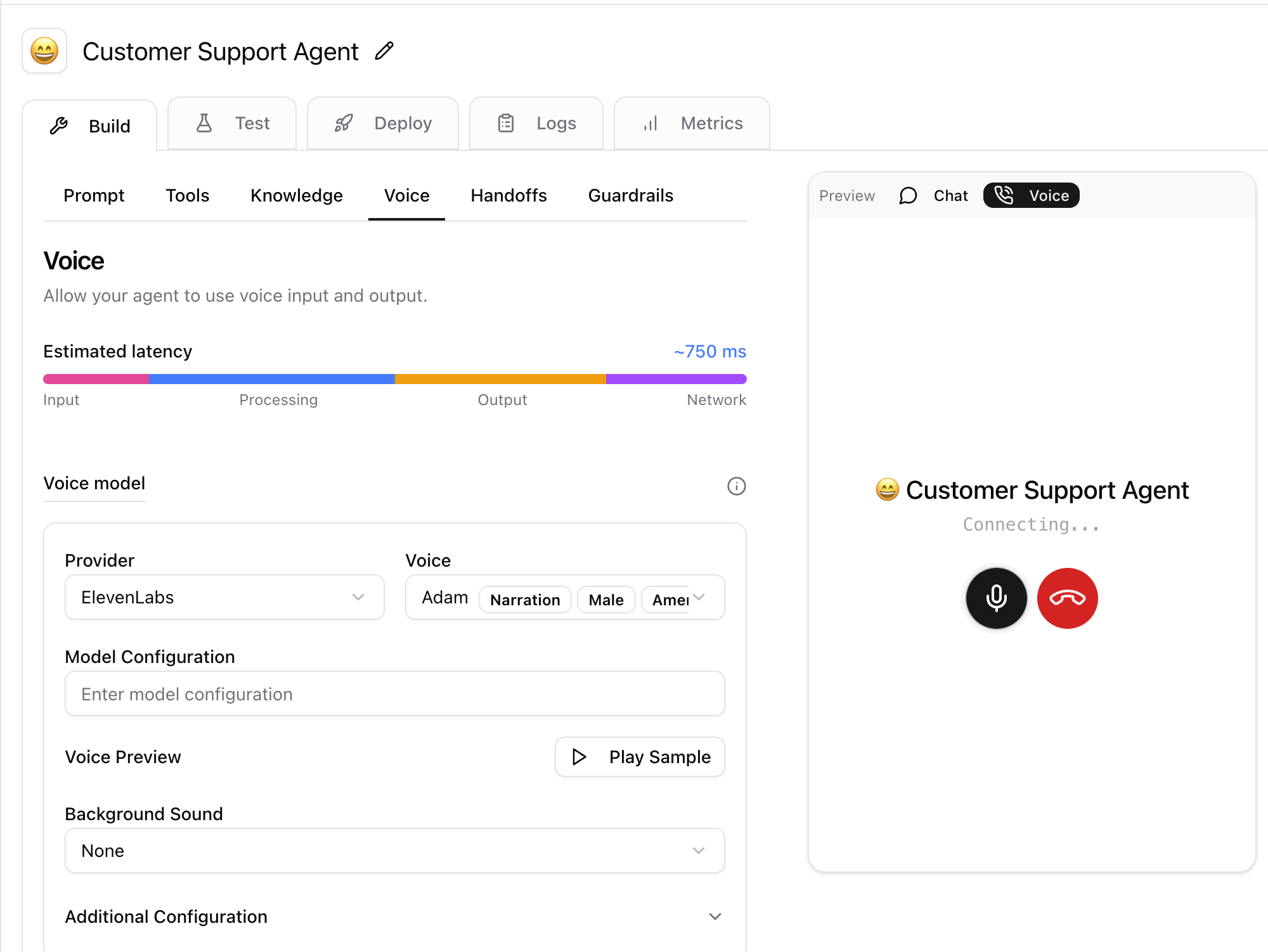

Voice

Your agents can speak! Seriously. To try it out, just click the “Voice” tab and the “Voice” preview” on the right. You can simulate a conversation with the agent and hear how it sounds. You can configure the agent’s voice, the transcription settings, interruption settings, and more from the Voice tab.

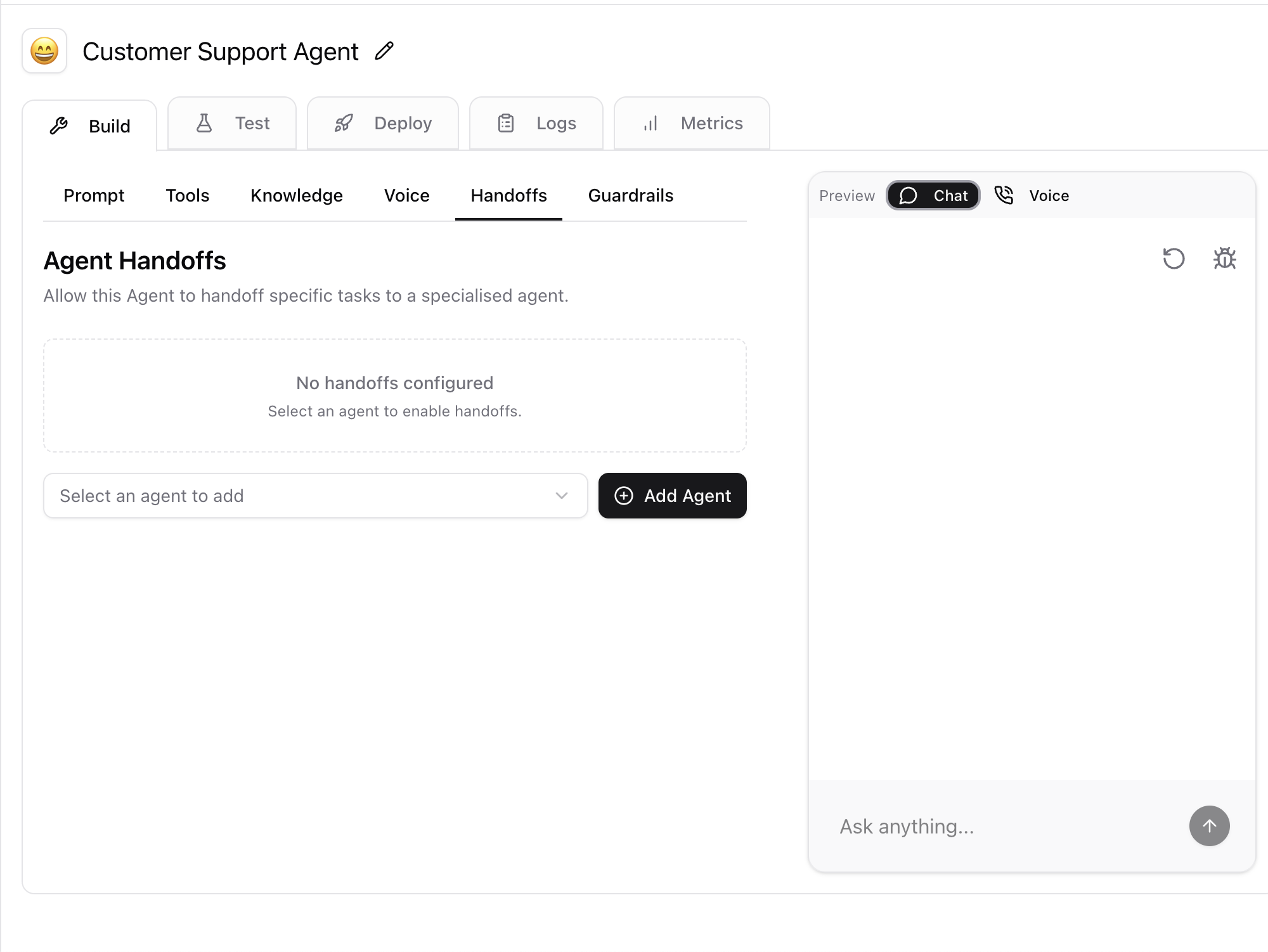

Handoffs

Handoffs allow agents to transfer the conversation to a human. For instance, you may instruct the agent to handoff if the user asks a question that is beyond its knowledge or when the conversation is more complex than the agent can handle. You can also handoff to another agent. For instance, you might have one agent that handles all customer support requests and another agent that handles all sales requests. If the customer support agent gets a sales question, it can handoff to the sales agent.

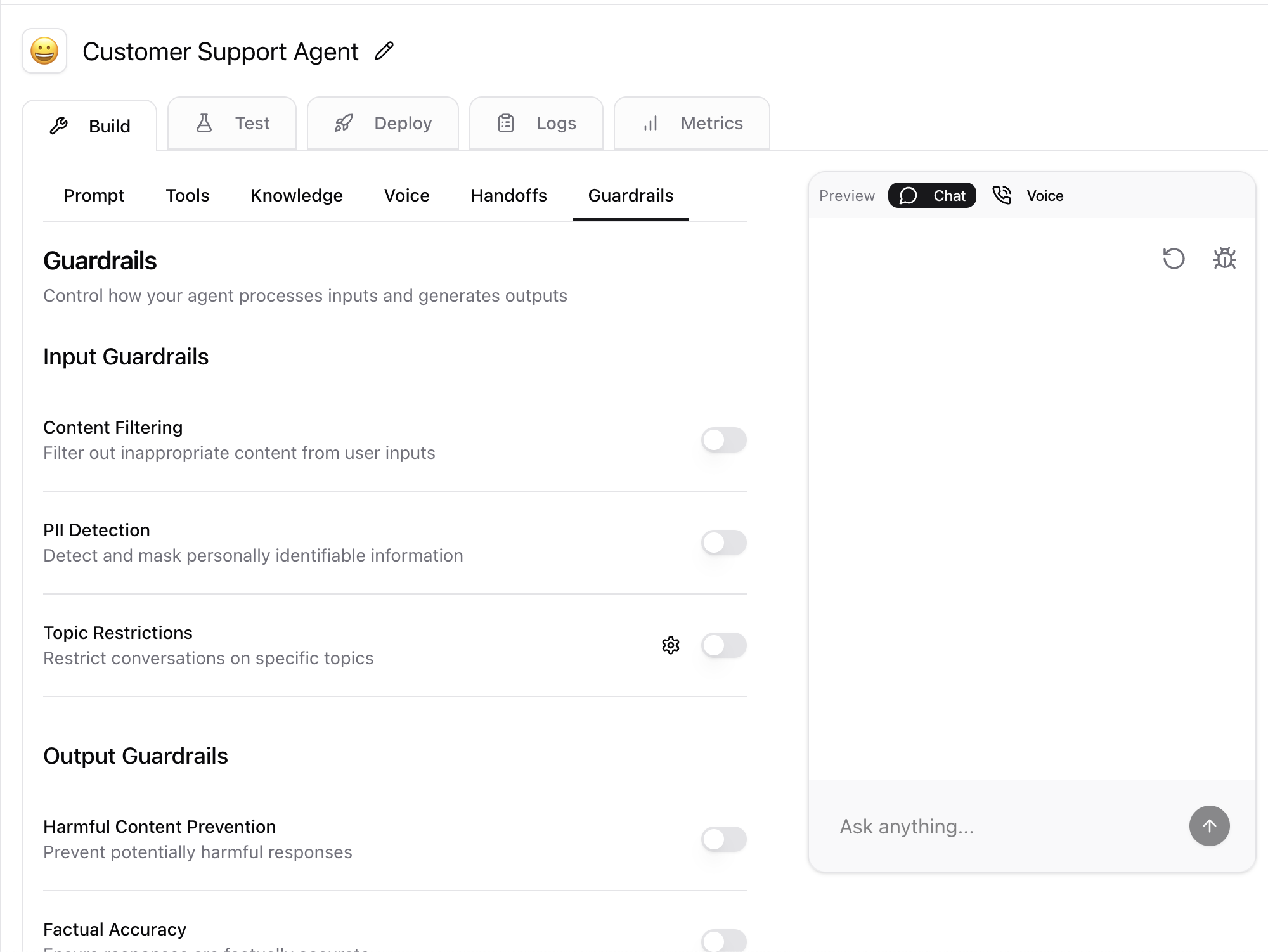

Guardrails

Guardrails allow you to define security, privacy, and other core rules for the agent. These rules are defined on inputs (e.g. what users send to the agent) and outputs (e.g. what the agent sends to the user).